I'm using an implementation of a Deep Q-Network (DQN) to solve the Lunar Lander environment. This implementation is inspired by the DQN described in (Mnih et al., 2015), where it was used to solve classic Atari 2600 games. Below I explore the decisions behind a successful implementation, how some of the hyperparameters were selected, and the overall results obtained by the trained agent.

The Lunar Lander environment has an infinite state space across 8 continuous dimensions, which makes standard Q-learning impossible to apply unless the space is discretized, and discretizing is inefficient and impractical for this problem. Instead, a DQN uses a Deep Neural Network (DNN) to approximate the Q*(s, a) function, getting around the limitation of the standard Q-learning algorithm for infinite state spaces.

I used TensorFlow for the implementation of the DNN. The network uses an Adam optimizer, and its structure consists of an input layer with a node for each of the 8 dimensions of the state space, a hidden layer with 32 neurons, and an output layer with a node for each of the 4 possible actions the lander can take. The activation function between the layers is a rectifier (ReLU), and there's no activation function for the output layer.

The experience gained by the agent while acting in the environment was saved in a memory buffer, and a small batch of observations from this buffer was randomly selected and used as the input to train the weights of the DNN, a process called "Experience Replay."

This setup, albeit very simple, didn't allow the agent to learn how to land consistently. In most of the experiments the agent wasn't able to surpass very low rewards, while in others it obtained very high rewards for a while but quickly diverged back to low rewards. As mentioned in (Lillicrap et al., 2015), directly implementing Q-learning with neural networks is unstable in many environments when the same network that's continuously updated is also used to calculate the target values. To get around this problem, I modified the initial setup and implemented two networks: one network (referred to as the Q network in the source code) is continuously updated, while a second network (designated the Q-target network) is used to predict the target value and is only updated from the weights of the Q network after every episode. This change stabilized the performance of the agent, getting rid of the continuous divergence in most scenarios.

Finally, on every step, the agent follows an ε-greedy approach, selecting a random action with probability ε and the best action learned by the network with probability 1 - ε. The value of ε is decayed after every episode, so the agent first spends most of its time exploring the possibilities and later shifts toward exploiting the learned policy.
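The pieces around the network (experience replay, the target value taken from the Q-target network, and ε-greedy selection with decay) can be sketched in plain Python. This is a minimal illustration, not the code from the actual implementation: the names `ReplayBuffer`, `td_target`, `select_action`, and `decay_epsilon`, the multiplicative decay schedule, and the `minimum` floor on ε are all assumptions made for this example.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size memory of (state, action, reward, next_state, done) tuples."""

    def __init__(self, capacity):
        # A deque with maxlen silently drops the oldest experience when full.
        self.memory = deque(maxlen=capacity)

    def add(self, state, action, reward, next_state, done):
        self.memory.append((state, action, reward, next_state, done))

    def sample(self, batch_size):
        # Uniform random minibatch; never asks for more than is stored.
        size = min(batch_size, len(self.memory))
        return random.sample(list(self.memory), size)

    def __len__(self):
        return len(self.memory)


def td_target(reward, next_q_values, done, discount):
    """Training target for one transition.

    `next_q_values` are meant to come from the Q-target network, not from
    the network being trained, so the target stays fixed within an episode.
    """
    if done:
        return reward
    return reward + discount * max(next_q_values)


def select_action(q_values, epsilon, rng=random):
    """Epsilon-greedy choice over a list of per-action Q-value estimates."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))  # explore: uniform random action
    return max(range(len(q_values)), key=lambda a: q_values[a])  # exploit


def decay_epsilon(epsilon, decay_rate, minimum=0.01):
    """Per-episode multiplicative decay with a floor (assumed schedule)."""
    return max(minimum, epsilon * decay_rate)
```

In a training loop, `select_action` would run on every step, `add` and `sample` would feed the network update, and `decay_epsilon` would be called once at the end of each episode, at the same point where the Q-target network's weights are refreshed from the Q network.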

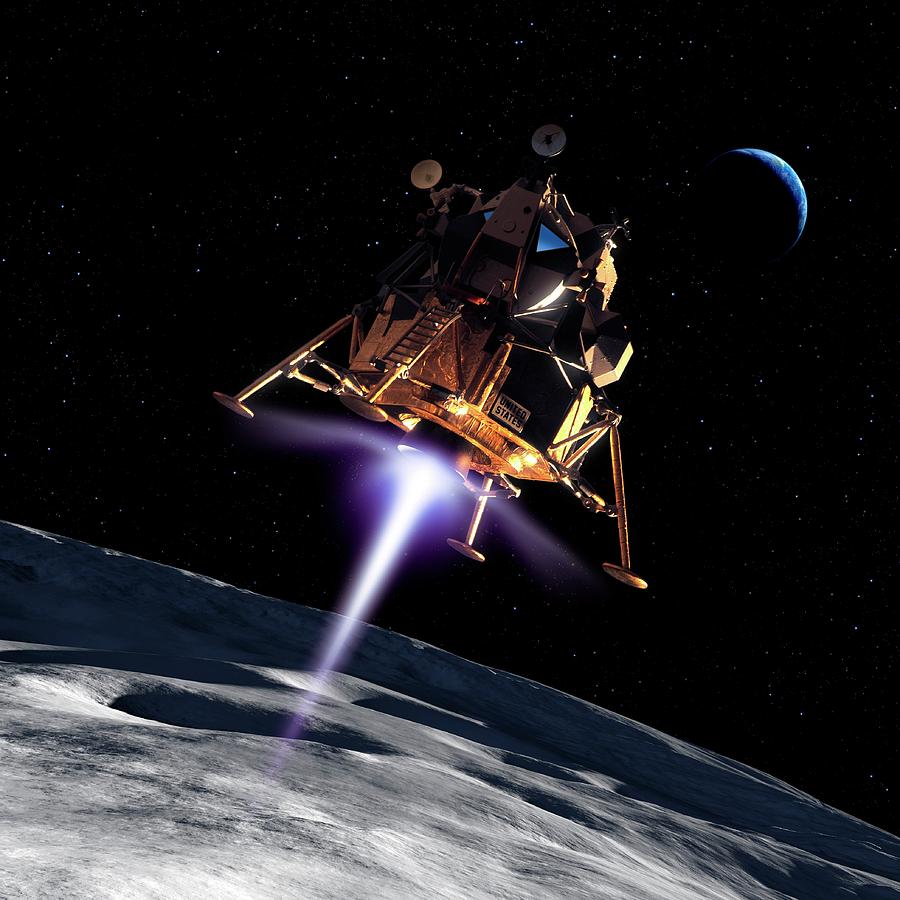

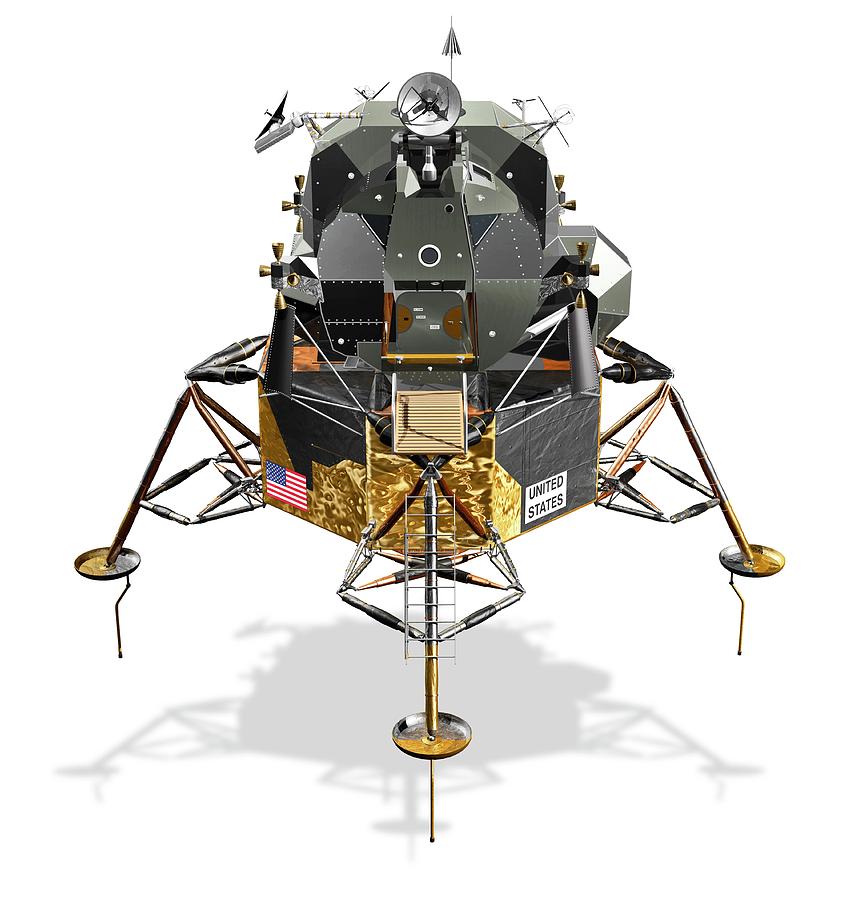

Here is an implementation of a reinforcement learning agent that solves OpenAI Gym's Lunar Lander environment. This environment consists of a lander that, by learning how to control 4 different actions, has to land safely on a landing pad with both legs touching the ground. A successfully trained agent should be able to achieve an average score of 200 or more over 100 consecutive runs.

The agent is created from its hyperparameters with `agent = Agent(training, LEARNING_RATE, DISCOUNT_FACTOR, EPSILON_DECAY)`. Feel free to change these values or include your own, whatever it takes to make things run better.
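The success criterion (an average of at least 200 over 100 consecutive runs) is easy to check while training. The helper below is a small sketch; the name `is_solved` and its parameters are hypothetical and not part of the original code.

```python
def is_solved(episode_rewards, window=100, threshold=200.0):
    """Return True once the mean of the last `window` rewards reaches `threshold`."""
    if len(episode_rewards) < window:
        return False  # not enough consecutive runs to judge yet
    recent = episode_rewards[-window:]
    return sum(recent) / window >= threshold
```

Calling `is_solved(rewards)` after each episode, with `rewards` holding the total reward of every episode so far, gives a simple stopping condition for training.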
